Privacy-First AI: The Rise of Federated and Encrypted Learning

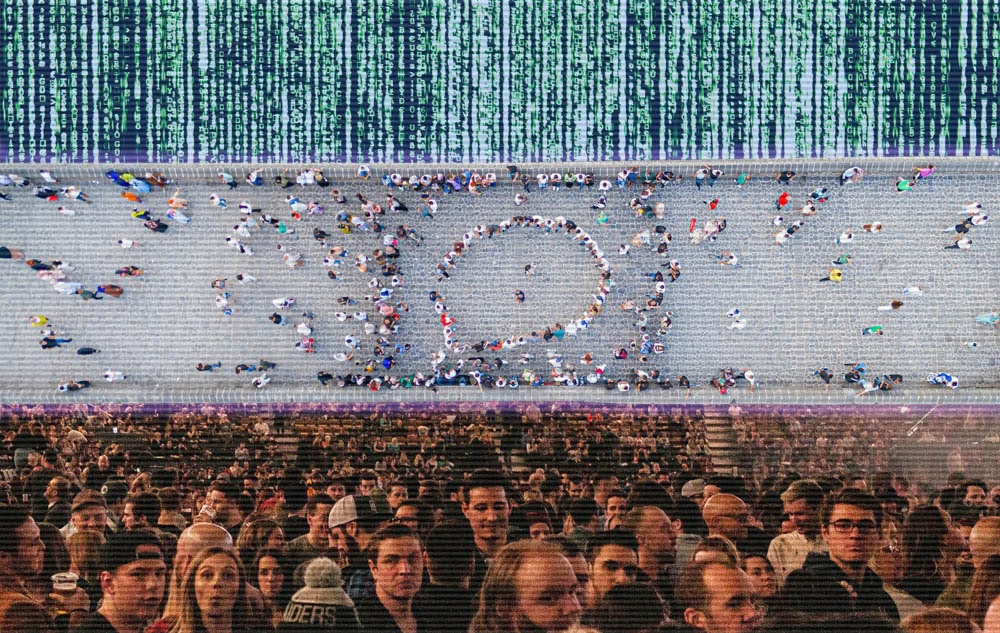

A few years ago, training an AI model felt like hosting an all-you-can-eat buffet. The more data piled onto the plate, the happier the algorithm seemed. Engineers collected logs, user clicks, purchase histories, and behavioral signals with enthusiasm. Storage was cheap. Compliance was an afterthought. Everyone went back for seconds.

Then the regulators showed up.

Suddenly, that generous buffet looked more like a liability. Data retention policies became urgent. Legal teams began asking uncomfortable questions. And executives discovered that “collect everything” is not a long-term AI strategy.

Now, as privacy laws tighten across the United States and globally, organizations must rethink how they train and test artificial intelligence systems. Generative AI in testing may promise automation and scale, but without privacy-preserving methods such as differential privacy and homomorphic encryption, modern AI architectures risk regulatory conflict and reputational damage.

Why Privacy-First AI Is No Longer Optional

The era of unconstrained data aggregation is coming to an end as governments around the world implement strict data protection frameworks such as the General Data Protection Regulation (GDPR) in Europe and the California Consumer Privacy Act (CCPA), a state law in the United States. These laws fundamentally reshape how organizations collect, store, and use personal information, placing far greater emphasis on user rights and organizational accountability.

Under these regulations, companies are generally expected to limit the data they gather to what is necessary, have a valid basis for processing it (such as user consent under the GDPR, or honoring opt-out requests under the CCPA), and be transparent about how information is used. Depending on the law, they must also comply with restrictions on transferring data across borders and honor user requests such as the right to have their data erased.

Traditional AI training pipelines - where data is centralized in cloud data lakes - can conflict with these principles. Centralization increases exposure risk, creates attractive targets for cyberattacks, and complicates compliance audits.

In response, organizations are turning toward privacy-preserving machine learning techniques that embed security directly into AI architecture rather than treating it as an afterthought.

What Is Privacy-First AI?

Privacy-first AI refers to machine learning systems designed to protect sensitive data at every stage of the model lifecycle - from collection to training, inference, and storage.

Rather than asking, “How much data can we gather?” the guiding question becomes, “How can we learn from data while minimizing exposure?”

Three core technologies define this approach:

- Federated learning

- Differential privacy

- Homomorphic encryption

Each addresses a different vulnerability in conventional AI pipelines.

Federated Learning: Training Without Centralizing Data

Federated learning fundamentally rethinks where model training occurs.

How Federated Learning Works

Instead of sending raw data to a central server, federated learning sends the model to the data.

Here is the simplified workflow:

- A global model is distributed to local devices or data silos.

- Each participant trains the model on its own data.

- Only model updates (not raw data) are sent back to a central server.

- The server aggregates updates to improve the global model.

This method was popularized by organizations like Google, particularly in applications such as predictive text and mobile personalization.
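
The four workflow steps above can be sketched in a few lines of Python. This is a minimal illustration of federated averaging on a one-parameter linear model, not any vendor's implementation: the function names (`local_update`, `federated_average`, `run_rounds`), the two data silos, and the learning rate are all assumptions made up for the example.

```python
from typing import List, Tuple

Weights = List[float]
Dataset = List[Tuple[List[float], float]]  # (features, target) pairs


def local_update(weights: Weights, data: Dataset, lr: float = 0.1, epochs: int = 5) -> Weights:
    """Train a linear model on one participant's private data with plain SGD."""
    w = list(weights)
    for _ in range(epochs):
        for x, y in data:
            pred = sum(wi * xi for wi, xi in zip(w, x))
            err = pred - y
            # Gradient step for the squared error 0.5 * err**2
            w = [wi - lr * err * xi for wi, xi in zip(w, x)]
    return w


def federated_average(updates: List[Weights], sizes: List[int]) -> Weights:
    """Aggregate local models, weighting each by its number of examples."""
    total = sum(sizes)
    dim = len(updates[0])
    return [sum(u[i] * n for u, n in zip(updates, sizes)) / total for i in range(dim)]


def run_rounds(global_w: Weights, silos: List[Dataset], rounds: int = 20) -> Weights:
    for _ in range(rounds):
        # Steps 1-2: distribute the global model; each silo trains locally.
        local_models = [local_update(global_w, data) for data in silos]
        # Steps 3-4: only weights leave each silo; the server averages them.
        global_w = federated_average(local_models, [len(d) for d in silos])
    return global_w


# Two hypothetical silos whose private data both follow y = 2 * x.
silo_a: Dataset = [([1.0], 2.0), ([2.0], 4.0)]
silo_b: Dataset = [([3.0], 6.0), ([-1.0], -2.0), ([0.5], 1.0)]

final_w = run_rounds([0.0], [silo_a, silo_b])
print(final_w)  # the single weight should end up very close to 2.0
```

The server function never touches `silo_a` or `silo_b` directly, only the weight lists returned by `local_update`. A real deployment would add participant sampling, handling for dropped clients, and protection of the updates themselves, since model updates can still leak information about the underlying data.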

Business Impact of Federated Learning

Federated learning offers several strategic advantages:

- Reduced data transfer risk

- Improved regulatory compliance

- Enhanced user trust

- Lower central storage costs

For industries such as healthcare, finance, and telecommunications - where data sensitivity is high - federated learning enables cross-institutional collaboration without sharing raw datasets.

However, federated systems require sophisticated coordination, secure aggregation protocols, and robust infrastructure to manage distributed updates efficiently.
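
To make "secure aggregation" more concrete, here is a simplified sketch of one common idea: pairwise masks that cancel when the server sums all contributions. It assumes updates have already been quantized to integers, that no participant drops out, and it uses a single seeded generator as a stand-in for the pairwise key agreement a real protocol would use. All names (`pairwise_masks`, `mask_update`, `aggregate`) are hypothetical.

```python
import random
from typing import Dict, List

MODULUS = 2**32  # all arithmetic is done modulo this value


def pairwise_masks(client_ids: List[int], dim: int, seed: int = 0) -> Dict[int, List[int]]:
    """Build one mask per client such that all masks sum to zero mod MODULUS.

    For every pair (i, j), a shared random vector is added to client i's
    mask and subtracted from client j's mask. In a real protocol each pair
    would derive this vector privately; the seeded generator is a stand-in.
    """
    rng = random.Random(seed)
    masks = {cid: [0] * dim for cid in client_ids}
    for a in range(len(client_ids)):
        for b in range(a + 1, len(client_ids)):
            shared = [rng.randrange(MODULUS) for _ in range(dim)]
            i, j = client_ids[a], client_ids[b]
            masks[i] = [(m + s) % MODULUS for m, s in zip(masks[i], shared)]
            masks[j] = [(m - s) % MODULUS for m, s in zip(masks[j], shared)]
    return masks


def mask_update(update: List[int], mask: List[int]) -> List[int]:
    """What a client actually sends: its update hidden under its mask."""
    return [(u + m) % MODULUS for u, m in zip(update, mask)]


def aggregate(masked_updates: List[List[int]]) -> List[int]:
    """Server-side sum; the pairwise masks cancel, leaving the true total."""
    dim = len(masked_updates[0])
    return [sum(u[k] for u in masked_updates) % MODULUS for k in range(dim)]


# Hypothetical integer-quantized updates from three participants.
updates = {1: [5, 10], 2: [7, 1], 3: [2, 2]}
masks = pairwise_masks(list(updates), dim=2)
masked = [mask_update(updates[c], masks[c]) for c in updates]
total = aggregate(masked)
print(total)  # [14, 13]
```

The server sees only the masked vectors, yet recovers the exact sum. The coordination cost mentioned above shows up here as the quadratic number of pairwise masks and, in practice, as extra protocol rounds to recover when a participant disconnects.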

Differential Privacy: Mathematical Guarantees of Anonymity

While federated learning limits data movement, differential privacy protects data within the model itself.

What Is Differential Privacy?

Differential privacy adds calibrated random noise to query results, training updates, or model outputs, so that it is hard to infer any individual record from what is released.

In practical terms, it provides a quantifiable privacy guarantee: the presence or absence of a single individual’s data changes the probability of any given output by only a bounded amount.
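
Stated formally, using the standard textbook definition (the symbols are introduced here for illustration and are not used elsewhere in this article): a randomized mechanism $M$ is $\varepsilon$-differentially private if, for every pair of datasets $D$ and $D'$ that differ in one individual's record, and for every set of possible outputs $S$,

$$
\Pr[M(D) \in S] \;\le\; e^{\varepsilon} \, \Pr[M(D') \in S].
$$

A small $\varepsilon$ forces the two probabilities to be nearly equal, so an observer learns very little about whether any one person's data was included.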

This technique has been implemented by organizations such as Apple in telemetry analysis and by Microsoft in research initiatives.

Trade-Offs and Considerations

Differential privacy requires a careful balance between accuracy and protection. By design, it adds statistical noise to computations over the data, which helps safeguard individual identities but reduces precision. The key variable in this process - the privacy budget, usually written ε (epsilon) - bounds how much the released results can reveal about any one individual: a smaller budget means more noise and stronger privacy, while a larger budget means less noise and weaker privacy.
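
The effect of the privacy budget can be seen in a small sketch of the Laplace mechanism applied to a counting query. The dataset, the function names, and the chosen epsilon values are assumptions for illustration; a production system would rely on a vetted differential privacy library rather than hand-rolled floating-point noise.

```python
import random
from typing import List


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(records: List[bool], epsilon: float, rng: random.Random) -> float:
    """Release a count with epsilon-differential privacy.

    Adding or removing one record changes a count by at most 1, so the
    sensitivity is 1 and the Laplace noise scale is 1 / epsilon.
    """
    sensitivity = 1.0
    return sum(records) + laplace_noise(sensitivity / epsilon, rng)


def mean_abs_error(epsilon: float, trials: int = 2000, seed: int = 0) -> float:
    """Average distance between the noisy count and the true count."""
    rng = random.Random(seed)
    records = [True] * 100 + [False] * 900  # hypothetical data: true count is 100
    errors = [abs(private_count(records, epsilon, rng) - 100) for _ in range(trials)]
    return sum(errors) / trials


for eps in (0.1, 1.0, 10.0):
    print(f"epsilon={eps}: mean absolute error ~ {mean_abs_error(eps):.2f}")
```

The expected error of this mechanism equals the noise scale, 1 / epsilon, so moving from epsilon = 10 to epsilon = 0.1 makes the answer roughly a hundred times noisier in exchange for a much tighter privacy bound.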

For business leaders, this becomes a strategic decision rather than a purely technical one. Stronger privacy guarantees can meaningfully reduce model accuracy, while dialing back the noise may improve performance but heightens the risk of re-identification. Navigating this trade-off demands a clear understanding of both the organization’s risk tolerance and its performance requirements.

Successfully implementing differential privacy also depends on close collaboration across teams. Data scientists must work alongside security specialists and compliance officers to ensure that privacy techniques align with regulatory expectations, operational needs, and the organization’s broader data strategy.

Homomorphic Encryption: Computing on Encrypted Data

If federated learning protects data location and differential privacy protects outputs, homomorphic encryption protects data during computation.

Understanding Homomorphic Encryption

Homomorphic encryption allows algorithms to perform computations directly on encrypted data without decrypting it first.

In traditional systems:

- Data must be decrypted before processing.

- During processing, it becomes vulnerable.

With homomorphic encryption:

- Data remains encrypted throughout computation.

- Results are decrypted only after processing is complete.

This capability dramatically reduces exposure risk in cloud-based AI environments.
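
As a concrete illustration of computing on ciphertexts, the sketch below implements a toy version of the Paillier cryptosystem, which is additively homomorphic: multiplying two ciphertexts yields an encryption of the sum of the plaintexts. This is a partially homomorphic scheme chosen for brevity, not the fully homomorphic schemes used for general computation, and the tiny primes make it completely insecure. The function names are hypothetical.

```python
import math
import random
from typing import Tuple

PublicKey = Tuple[int, int]   # (n, g)
PrivateKey = Tuple[int, int]  # (lam, mu)


def keygen(p: int, q: int) -> Tuple[PublicKey, PrivateKey]:
    """Derive a Paillier key pair from two primes (toy sizes only)."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p-1, q-1)
    g = n + 1
    # mu is the modular inverse of L(g^lam mod n^2), where L(x) = (x - 1) // n
    mu = pow((pow(g, lam, n * n) - 1) // n, -1, n)
    return (n, g), (lam, mu)


def encrypt(pub: PublicKey, m: int, rng: random.Random) -> int:
    n, g = pub
    while True:
        r = rng.randrange(1, n)
        if math.gcd(r, n) == 1:  # r must be invertible modulo n
            break
    return (pow(g, m, n * n) * pow(r, n, n * n)) % (n * n)


def decrypt(pub: PublicKey, priv: PrivateKey, c: int) -> int:
    n, _ = pub
    lam, mu = priv
    return (((pow(c, lam, n * n) - 1) // n) * mu) % n


def add_encrypted(pub: PublicKey, c1: int, c2: int) -> int:
    """Combine two ciphertexts so the result decrypts to the sum."""
    n, _ = pub
    return (c1 * c2) % (n * n)


rng = random.Random(0)
pub, priv = keygen(61, 53)  # toy primes; real keys use primes of 1024+ bits

c1 = encrypt(pub, 42, rng)
c2 = encrypt(pub, 100, rng)
c_sum = add_encrypted(pub, c1, c2)  # computed without the private key
print(decrypt(pub, priv, c_sum))    # 142
```

The party calling `add_encrypted` needs only the public key and never sees 42, 100, or 142. The modular exponentiations on every operation also hint at why encrypted computation is far slower than working on plaintext.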

Real-World Applications

Although computationally intensive, homomorphic encryption is gaining traction in sectors requiring extreme confidentiality, including banking and medical research.

Companies such as IBM and Intel have invested heavily in advancing practical implementations.

As performance improves, encrypted learning could become foundational to secure AI-as-a-service platforms.

Architectural Implications for AI Systems

The shift toward privacy-preserving machine learning significantly alters AI system design.

From Centralized to Distributed Architectures

Legacy AI systems often rely on centralized data lakes. Privacy-first AI encourages:

- Edge computing integration

- Distributed model training

- Secure aggregation servers

- Encrypted communication channels

This transition increases architectural complexity but enhances resilience and regulatory alignment.

Security by Design

Privacy‑first AI requires organizations to embed encryption, access control, and auditability directly into their infrastructure from the very beginning. Building systems this way forces teams to think about security as a foundational design principle rather than an afterthought, ensuring that sensitive data is protected throughout the entire lifecycle of an AI system.

To achieve this, organizations must evaluate the strength of their key management systems, the suitability of secure multi‑party computation protocols, and the governance policies that guide how models are updated over time. As these considerations become central to AI development, the discipline of AI engineering increasingly overlaps with - and in many cases depends on - the principles of modern cybersecurity architecture.

Compliance, Trust, and Competitive Advantage

Privacy-first AI is not solely a defensive measure. It can create strategic advantages.

Regulatory Readiness

Companies adopting federated learning and encrypted AI pipelines are better positioned to adapt to evolving privacy regulations.

Consumer Trust

In markets where data misuse scandals have eroded public confidence, demonstrating privacy-by-design practices can strengthen brand reputation.

Data Collaboration Opportunities

Privacy-preserving technologies enable partnerships that were previously infeasible due to legal constraints. Multiple organizations can train shared models without exposing proprietary datasets.

This collaborative potential may accelerate innovation across industries.

Challenges and Limitations

Despite its promise, privacy-first AI is not without obstacles.

Computational Overhead

Homomorphic encryption and secure aggregation protocols require significant processing power.

Model Complexity

Distributed systems introduce synchronization challenges and potential inconsistencies.

Skill Gaps

Implementing federated learning and differential privacy demands specialized expertise that many organizations currently lack.

Addressing these challenges requires investment in talent, infrastructure, and governance frameworks.

The Future of Privacy-Preserving Machine Learning

The trajectory of AI development suggests that privacy will become a baseline expectation rather than a differentiator.

Emerging trends include:

- Hybrid architectures combining federated learning with differential privacy

- Hardware-based secure enclaves

- Automated compliance auditing tools

- Privacy-aware AI model marketplaces
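
The first trend in the list above, combining federated learning with differential privacy, is often approached by clipping each participant's update and adding noise to the aggregate. The sketch below shows only that clip-and-noise step under assumed names and parameters (`max_norm`, `noise_multiplier`); translating a noise level into a specific privacy budget requires privacy accounting that is not shown here.

```python
import math
import random
from typing import List


def clip_update(update: List[float], max_norm: float) -> List[float]:
    """Scale an update down so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(v * v for v in update))
    factor = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [v * factor for v in update]


def private_average(
    updates: List[List[float]],
    max_norm: float,
    noise_multiplier: float,
    rng: random.Random,
) -> List[float]:
    """Average clipped updates after adding Gaussian noise to their sum."""
    clipped = [clip_update(u, max_norm) for u in updates]
    dim = len(clipped[0])
    # Clipping bounds each participant's influence, so noise is scaled to it.
    sigma = noise_multiplier * max_norm
    noisy_sum = [sum(u[k] for u in clipped) + rng.gauss(0.0, sigma) for k in range(dim)]
    return [s / len(updates) for s in noisy_sum]


# Hypothetical updates from three participants; the first is an outlier.
updates = [[3.0, 4.0], [0.3, 0.4], [-0.2, 0.1]]
rng = random.Random(0)
print(private_average(updates, max_norm=1.0, noise_multiplier=0.5, rng=rng))
```

Clipping caps how much any one participant can move the global model, which is what makes the added noise meaningful as a privacy safeguard; the cost is the same accuracy trade-off that applies to differential privacy in general.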

As privacy regulations continue evolving globally, organizations that embed encrypted learning and distributed training models into their AI infrastructure will likely adapt more effectively.

In the long term, privacy-first AI may become synonymous with responsible AI.

Conclusion: Designing AI for a Regulated World

The age of unrestricted data centralization is fading. Regulatory scrutiny, consumer expectations, and cybersecurity threats demand a new approach to AI development.

Federated learning reduces data exposure by keeping information local. Differential privacy introduces mathematical safeguards against re-identification. Homomorphic encryption enables secure computation without decryption.

Together, these technologies form the foundation of privacy-first AI architectures.

For business leaders and technology strategists, the path forward is clear: evaluate current AI pipelines, identify points of data vulnerability, and pilot privacy-preserving methods within controlled environments.

Rather than viewing privacy as a constraint, organizations should treat it as a design principle. In doing so, they will not only achieve compliance but also build AI systems that are secure, scalable, and worthy of user trust.

The next generation of artificial intelligence will not simply be smarter. It will be safer by design.